Rotoscoping RAM

Alchemizing a filmmaking failure into literal wins.

An earlier version of this article was published under the same title on Medium.

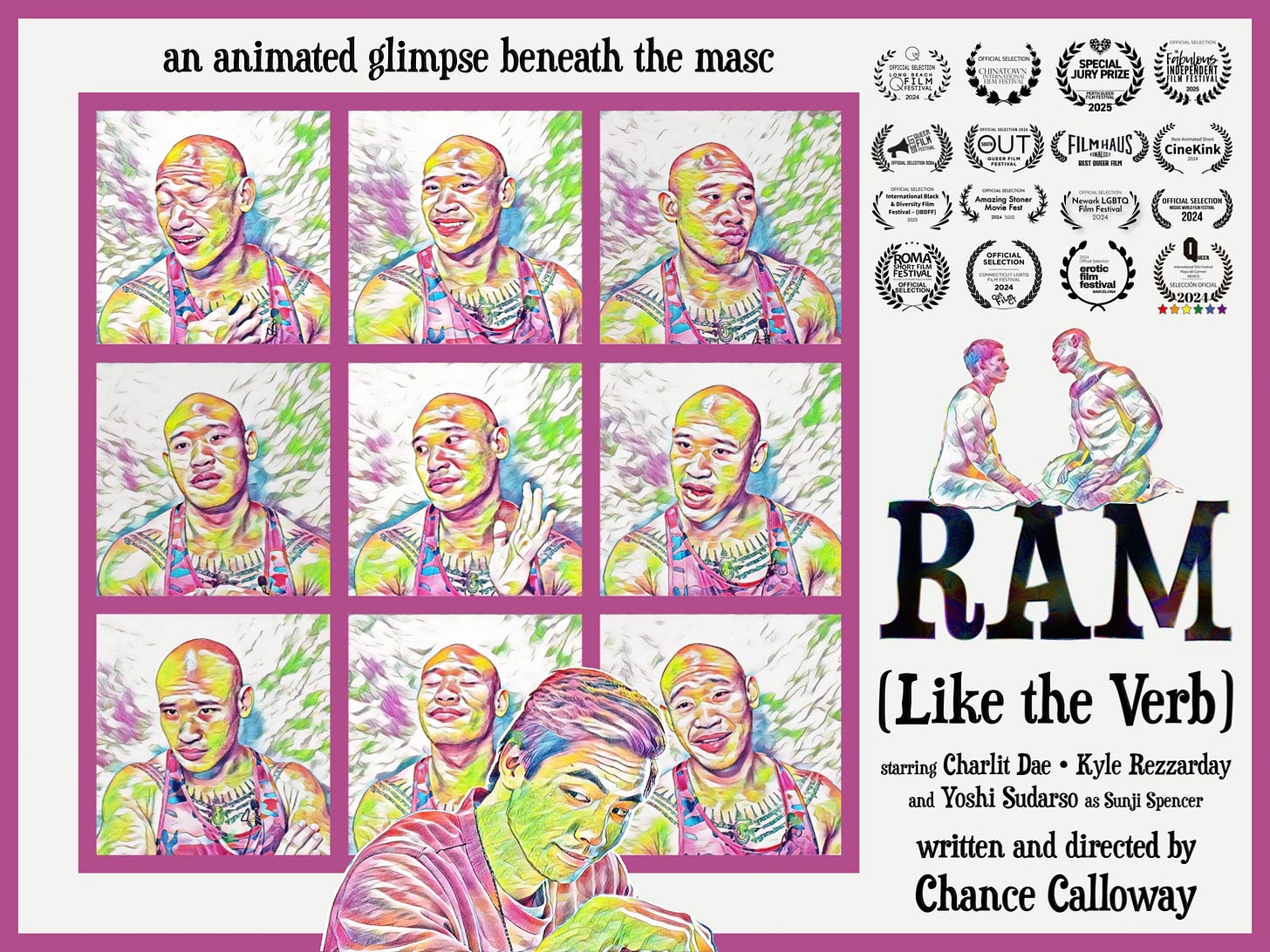

For the last two years, my short film RAM (Like the Verb) has been screening at film festivals around the world, challenging perspectives on masculinity and racial dynamics in sexual relationships. It’s a rotoscoped animated film, delivered in pastels as vibrant as the titular character's personality, embodied by Charlit Dae. The film was born out of the remains of the unfinished second season of my series Pretty Dudes, which was hit by an onslaught of scheduling conflicts and a worldwide pandemic. Since I had poured thousands of crowdfunded dollars into filming an expansive story that no one would get to see, I wanted to ensure all of that effort was preserved somehow, and, after several years, RAM is the atypical result.

There’s a reason this process took me over three years to complete. At the start of the pandemic-prompted lockdown, I played around with taking each still frame from the “How We Break” music video and animating it individually. That took several weeks, and I wasn’t too pleased with the results. The GIF below of Dae and Christian Olivo is from one of the clips I animated that way.

In my opinion, this effect was purposefully glitchy at best, unintentionally distracting at worst, especially with longer shots. Depending on the light and shadow from one frame to the next, the coloring could drastically and distractingly change with no smoothness in transition. After viewing a few converted clips and listening to my complaints about what a time- and storage-consuming nightmare my pandemic project was turning into, my buddy Alan recommended that I look into example-based synthesis technology.

This was a fascinating option to me. Instead of animating each frame individually, I animated what are called “keyframes,” or frames from the video that the surrounding frames copy their animation from. Essentially, the keyframes are the “example,” and what happens with the surrounding frames is the “synthesis.” It’s not AI, as it’s not creating anything from scratch. Succinctly, if it’s not in the keyframe, then it doesn’t exist to the technology. So if there are significant changes in a scene, like an actor entering the frame or turning their head, I need multiple keyframes to ensure the visual stays clean instead of turning into a blurry mess. (Which did happen on occasion! We’ll get to that in a moment.) Compare the GIF below to the one above.

Much smoother, right? So instead of returning to the music video (which was already completed, and had also won co-director Gerry Maravilla and me some hardware), I decided instead to use the synthesis technology on a short film, briefly attempting to animate Pretty Dudes: The Double Entendre before choosing to match footage that wasn’t initially intended for the same video.

Examples: there’s a sequence where Ram (Dae) shares some career misgivings with fellow actor Sunji Spencer (Yoshi Sudarso) that was filmed for a Sunji-centric episode of season two, as well as a sequence originally intended for a separate episode where Ram discusses impending coitus with his boyfriend Alexander (Kyle Rezzarday).
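For the technically curious, here is a minimal Python sketch of the keyframe bookkeeping described above. It is not the synthesis software I used, and real example-based synthesis does far more than this. It only illustrates the idea that every frame borrows its look from a hand-animated keyframe. The function names and the "closest keyframe wins" rule are assumptions made for illustration.

```python
from bisect import bisect_left


def nearest_keyframe(frame, keyframes):
    """Return the keyframe closest to `frame`.

    `keyframes` must be a sorted, non-empty list of frame numbers.
    The nearest-keyframe rule is an illustrative assumption.
    """
    i = bisect_left(keyframes, frame)
    if i == 0:
        return keyframes[0]
    if i == len(keyframes):
        return keyframes[-1]
    before, after = keyframes[i - 1], keyframes[i]
    # Ties go to the earlier keyframe.
    return before if frame - before <= after - frame else after


def assign_frames(total_frames, keyframes):
    """Group frames 0..total_frames-1 under the keyframe they would copy."""
    groups = {k: [] for k in keyframes}
    for frame in range(total_frames):
        groups[nearest_keyframe(frame, keyframes)].append(frame)
    return groups


# A ten-frame shot with hand-animated keyframes at frames 2 and 7.
for keyframe, frames in assign_frames(10, [2, 7]).items():
    print(keyframe, frames)
```

In this toy version, a shot where an actor walks into frame would simply need another entry in the keyframe list near the moment they appear, which is the same reason I needed multiple keyframes for busy scenes.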

My limitations with the synthesis technology had to be taken into consideration, creating a need for certain alterations in the editing bay. Some necessary pieces of audio were unfortunately paired with unusable visuals, requiring those lines and adjacent jokes — and on a few occasions, complete subplots — to be cut. This included removing the entirety of the original third act.

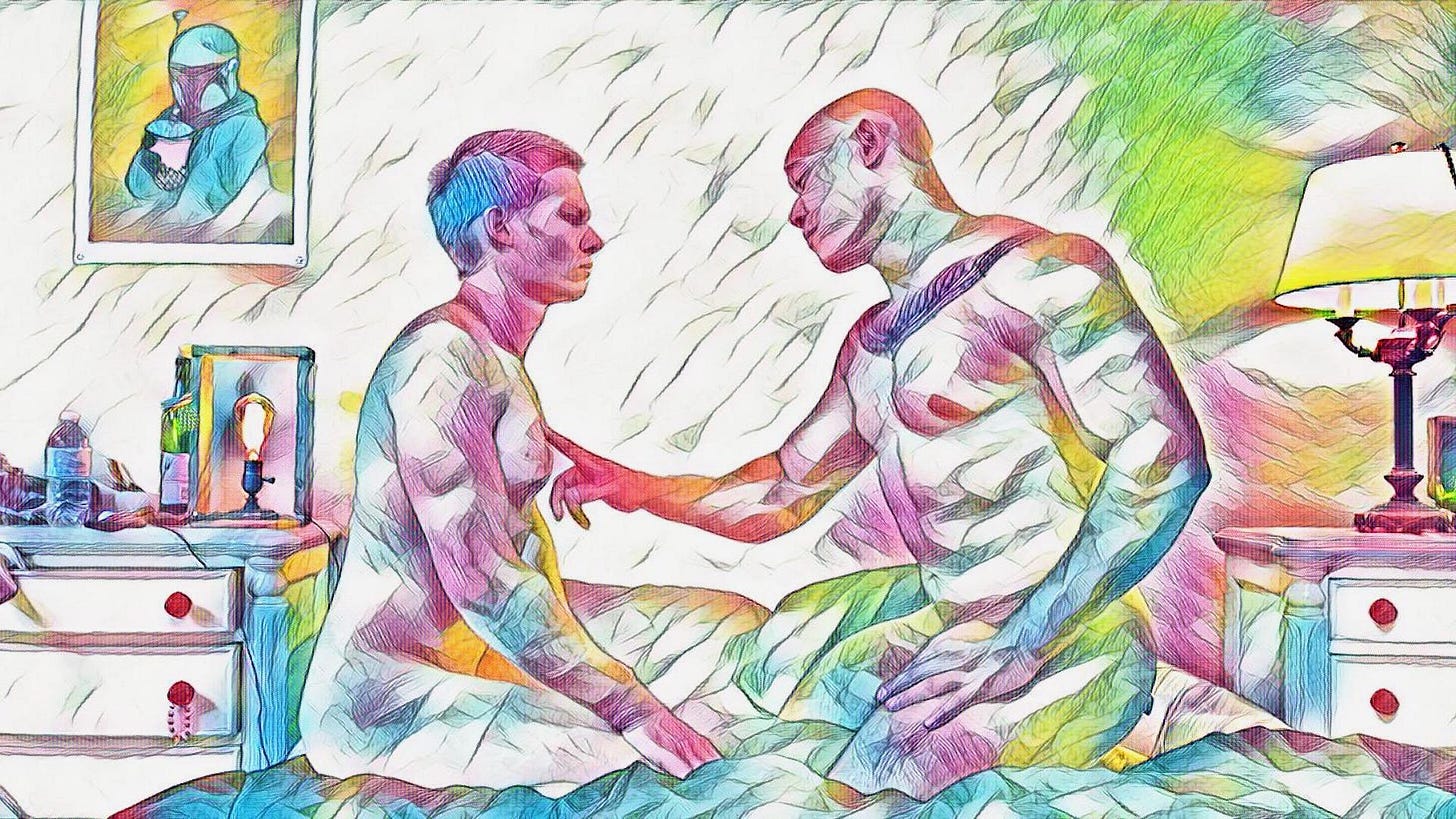

Both Ram and Alexander enter the frame early in a pivotal third-act sequence. In the case of Alexander, it really looks like someone just smears color across the screen, and no number of keyframes could fix it without essentially resorting to the earlier option of animating every single frame. Conversely, the remainder of the scene, which worked so well in live action because of its focus on dialogue and emotion, did not lend itself to animation: the framing was stagnant, and faces and arms blurred frequently (as in every few frames) because the scene was intentionally lit darkly to express nighttime. The scene journeyed from not being visually salvageable to not being visually interesting, before jumping to an intimate moment between the two characters that was so, ahem, vigorous, that the technology had a difficult time tracking hands and heads and asscheeks. So I cut the entire thing. When looking at the example below, take into consideration that this was the result of several months of work on this scene.

The GIF above illustrates some of the issues I was able to mask in other scenes. Notice the posters, or the edges of the frame, when the camera moves. All of this would have been easier to adjust if the scenes were originally filmed with rotoscoping in mind, but since they weren’t, the entire scene needed to be scrapped. I didn’t want the audience concerned with those types of visual anomalies at the expense of engagement with the characters and Ram’s journey.

Looking beyond the hands-on aspects, the apps I used completely ate up my computer’s RAM (har, har) to the point that whenever more than three keyframes were being used for synthesis, I couldn’t get any other apps to respond faster than a sleeping sloth. Take that knowledge and add the fact that I was unhoused and bouncing around from location to location while animating the film. Having my laptop hooked up to external drives and running (HOT AND LOUD) all hours of the day just wasn’t feasible, so animation was touch and go until I was able to move into my own place and eventually get a desktop to handle all the synthesis heavy lifting, enabling me to do other things on my laptop… Like, literally everything else.

Once the animated frames were complete, I focused on the transitions between keyframes to enable fluidity within individual cuts. With a project that started with 21,999 individual frames, well, that’s what took an additional two years. I don’t even know how many keyframes I ended up using, but it was more than 400.

That’s a brief (believe me!) look into what it took to rotoscope RAM (Like the Verb). Since its premiere at the Leeds Queer Film Festival in the UK, RAM has screened all over the United States (Los Angeles, Durham, Chicago, Newark, and Atlanta, to name a few) and the world, including physical screenings in Mexico, Thailand, Canada, and Australia. Among the honors the short has received are Best Animated Short at CineKink, Best Director and Best Visual Art at the Chinatown International Film Festival 国際映像, a Special Jury Prize from the Perth Queer Film Festival, and a cherished nomination for Best Stoner Short at Thailand’s Amazing Stoner Movie Fest.

I hope RAM (Like the Verb) stands as a testament to what can be done to ensure that art endures, no matter the circumstances surrounding the creation.